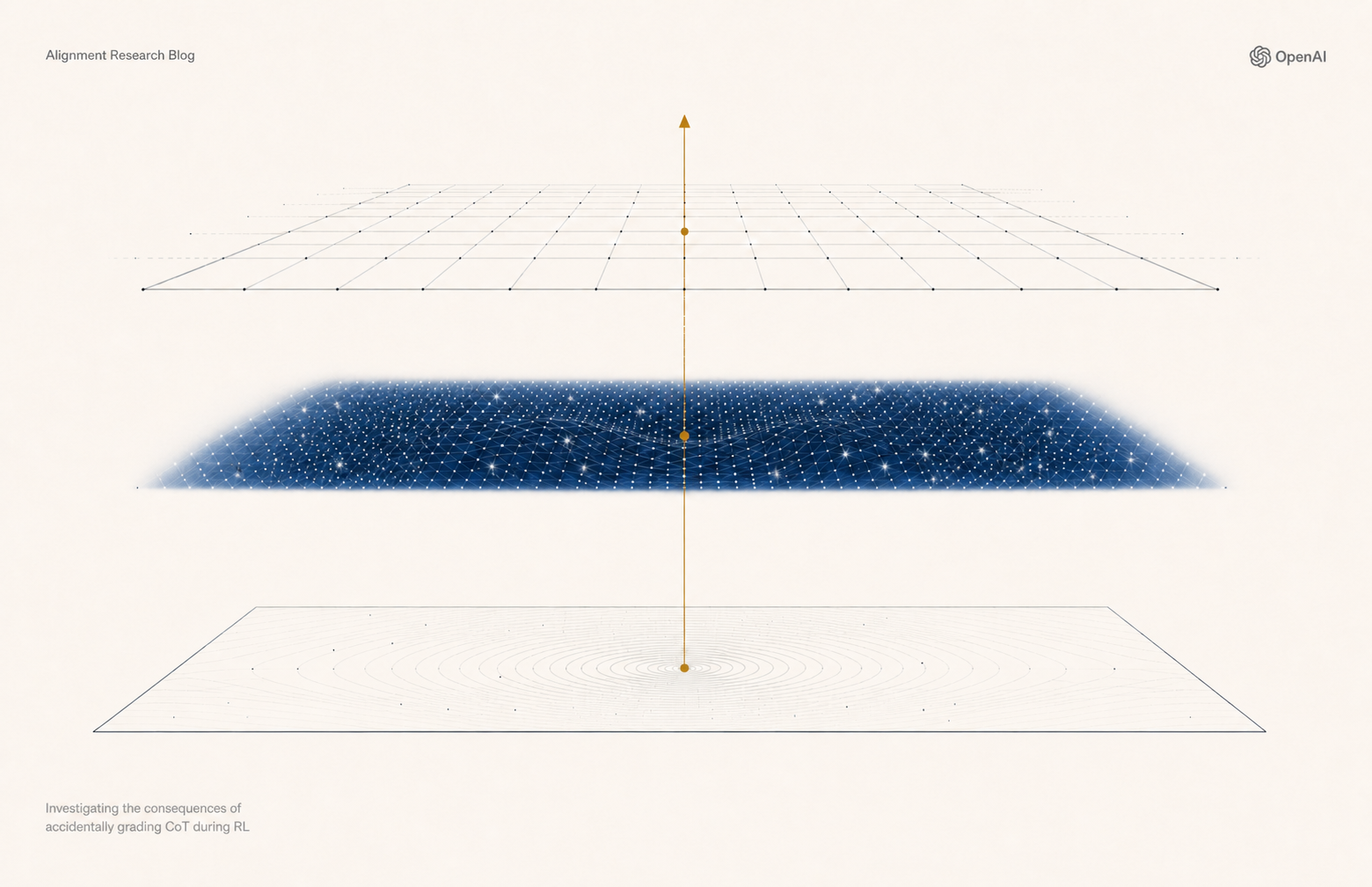

Accidental CoT Grading Analysis

Summary

Posted in 📖 Scripture & Skills 🎮, this announcement summarizes OpenAI's analysis of a limited amount of accidental Chain-of-Thought (CoT) grading during reinforcement learning (RL). It explains that the affected reward pathways were fixed, reports no clear evidence of degraded monitorability, and links to the full report so the community can understand the implications for AI safety and transparency.

@OpenAI Announcements: Chain of thought monitors are a key layer of defense against AI agent misalignment. To preserve monitorability, we avoid penalizing misaligned reasoning during RL.

We found a limited amount of accidental CoT grading which affected released models, and are sharing our analysis.

https://alignment.openai.com/accidental-cot-grading/

Investigating the consequences of accidentally grading CoT during RL

We found limited accidental CoT grading in some released models, fixed the affected reward pathways, and found no clear evidence that monitorability degraded.